UX Case Study

Translating a CEO-Level Hiring Methodology into a Scalable AI System

Transformed a proprietary Fortune 500 CEO hiring methodology into a scalable AI interview platform by designing conversational agents, implementing behavioral guidelines, and establishing structured evaluation systems.

Developed AI behavior aligned with executive standards, stabilized performance during infrastructure updates, and delivered reliable, decision-ready outputs trusted by recruiters at scale.

Role & Context

Company: Confidential AI Startup

Engagement: SeaLab, August 2024

Role: Lead Product Designer

Scope: AI-driven executive interview orchestration platform

Primary Users: Recruiters, Hiring Managers, Executive Leadership

Overview

A proprietary hiring practice used by Fortune 500 CEOs relied on:

Strict question discipline

Behavioral signal extraction

Structured scoring

High decision accountability

It worked because it was expert-driven.

The challenge:

Adapt this methodology into a scalable AI agent while maintaining its rigor, structure, and credibility.

The goal was not just to create a chatbot.

It was to build an AI that behaves like a trained executive interviewer.

The Problem

The early prototypes had some predictable problems:

Agents tended to ask questions that were too complex or combined several topics.

The prompts often strayed from the intended methodology.

Follow-up questions did not draw out enough detail to accurately diagnose issues.

The outputs were wordy but did not help with decision-making.

Recruiters found it hard to compare candidates consistently.

The AI could hold a conversation, but it did not stay focused or follow guidelines.

The company needed to move from:

Generative AI conversation → Deterministic expert protocol execution

Product Architecture

I approached the solution through three main layers:

Conversational Agent Layer (candidate-facing)

Evaluation & Scoring Layer (recruiter-facing)

Prompt Governance & State Control Layer (system-facing)

Designing this solution involved both behavioral systems design and product design.

Candidate Experience

Visual Callout 1: Interview Flow Interface

On the interview screen:

Only one question appears at a time.

Questions are grouped into clear chapters, such as Accomplishments, Lows, and Peers.

A progress tracker shows completion, for example, 12 out of 45 questions.

A sufficiency indicator shows whether an answer contains enough detail.

The conversation follows a strict, single-threaded flow.
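The single-threaded flow described above can be illustrated with a small state object. This is a minimal sketch, not the platform's actual implementation: the class name `InterviewState`, its fields, and the `sufficient` flag are all hypothetical, and it assumes the sufficiency decision is made elsewhere and passed in.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class InterviewState:
    """Hypothetical single-threaded interview state: one active question at a time."""

    # (chapter, question text) pairs in a fixed order, e.g. ("Accomplishments", "...")
    questions: list[tuple[str, str]]
    index: int = 0
    answers: list[str] = field(default_factory=list)

    def current(self) -> Optional[tuple[str, str]]:
        """Return the active (chapter, question) pair, or None when finished."""
        if self.index < len(self.questions):
            return self.questions[self.index]
        return None

    def submit(self, answer: str, sufficient: bool) -> None:
        """Record an answer and advance only if it was judged sufficient."""
        if self.current() is None:
            raise RuntimeError("interview already complete")
        if not sufficient:
            return  # stay on the same question; no branching to other topics
        self.answers.append(answer)
        self.index += 1

    def progress(self) -> str:
        """Progress label in the style of the tracker on the interview screen."""
        return f"{self.index} out of {len(self.questions)} questions completed"
```

Because the only way to move forward is through `submit`, the flow cannot skip a question or open a second thread, which is the property the interface is meant to make visible.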

Why this is important

Executive interview methodology relies on:

Following a logical sequence

Managing cognitive load

Behavioral signal extraction

The user interface supports:

Deliberate responses instead of rushing

A structured approach rather than casual chatting

Maintaining focus instead of prioritizing friendliness

There is no unnecessary conversation and no shift in tone.

Recruiter Experience

Visual Callout 2: Candidate Dashboard

Recruiters need:

The ability to view multiple candidates at once

Access to structured metadata

Evaluation outputs that are easy to compare

Less room for interpretation bias

The dashboard provides:

A clear view of structured candidate attributes

Tracking for job applications and the evaluation stages

Consistency across all interview sessions

The design prioritizes signal density over unnecessary UI decoration.

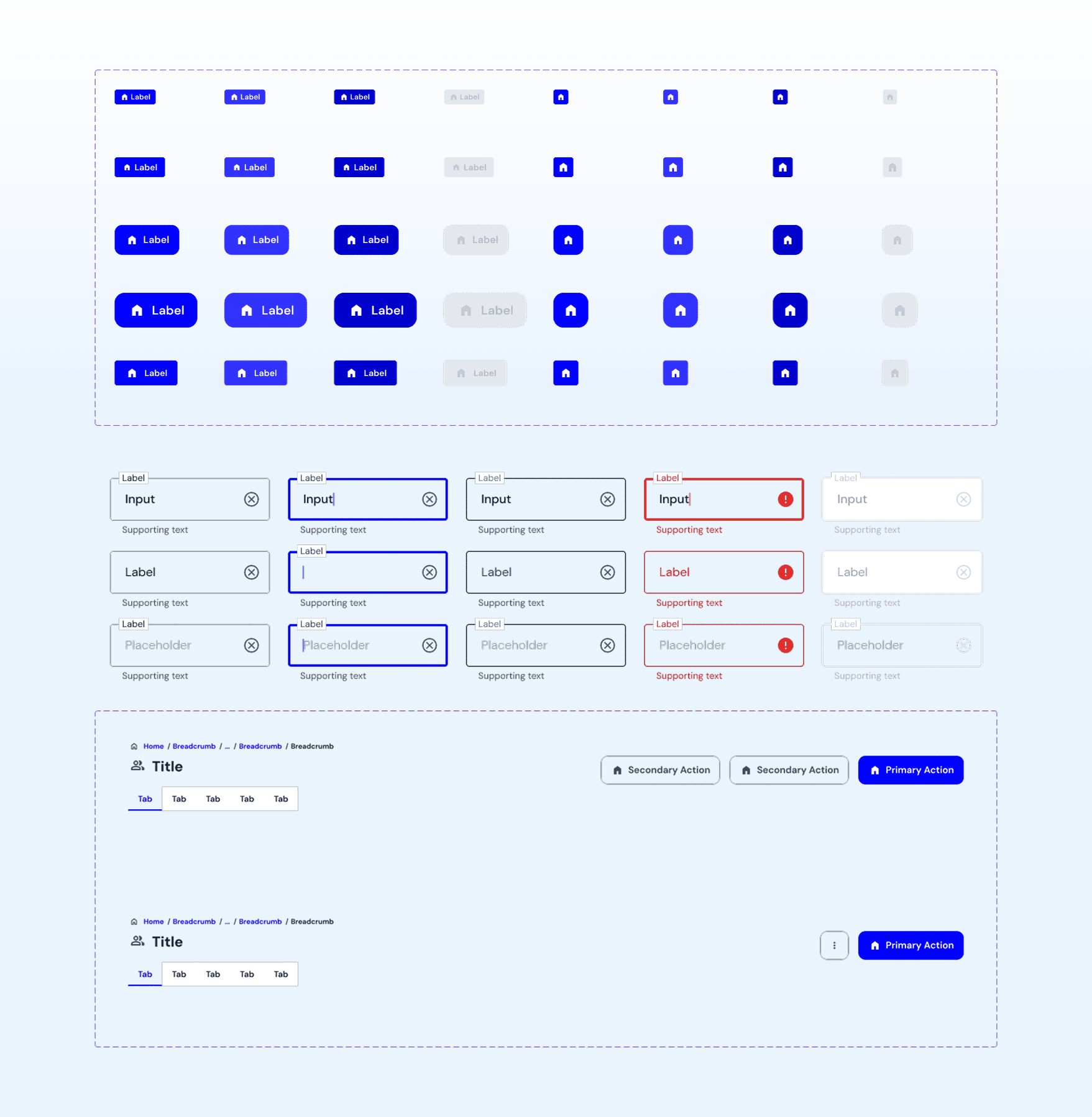

Strengthening System Cohesion: Rapid Design System Overhaul

Creating Behavioral Discipline in AI

Stakeholders insisted on zero tolerance for the following:

Hallucinations

Compound questions

Improvised follow-ups

Conversational padding

Tone inconsistency

My Approach: The Behavioral Guardrails Framework

Layer 1: Enforcing Structure

Single-question validation logic

Question taxonomy mapping

Required follow-up dependency enforcement

Conversation state management
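As an illustration of what single-question validation logic might look like, here is a deliberately simple heuristic. It is an assumption for illustration only, not the validator used on the project: the function name `is_single_question` and the regular expression are hypothetical, and the check assumes every prompt is phrased as a question ending in a question mark.

```python
import re

# Hypothetical heuristic: a conjunction followed by a question word
# ("and how", "or why", ...) usually signals a compound question.
_QUESTION_WORDS = r"(?:what|why|how|when|where|who|which)"
_COMPOUND_JOIN = re.compile(
    rf"\b(?:and|or|also)\s+{_QUESTION_WORDS}\b", re.IGNORECASE
)


def is_single_question(text: str) -> bool:
    """Return True if the text looks like exactly one question."""
    stripped = text.strip()
    # Exactly one question mark, and it must close the prompt.
    if stripped.count("?") != 1 or not stripped.endswith("?"):
        return False
    # Reject prompts that chain a second question onto the first.
    return _COMPOUND_JOIN.search(stripped) is None
```

A check like this would run on each generated prompt before it reaches the candidate. A production validator would likely need more than pattern matching, but even a narrow rule makes the "one question at a time" requirement testable.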

Layer 2: Defining Voice and Tone

Executive-neutral tone

No affirmations (“Great answer”)

No filler language

No personality injection

Layer 3: Ensuring Consistent Outputs

Structured scoring outputs

Confidence scoring

Risk flags

Behavioral theme extraction

The framework turned unspoken executive instincts into clear, machine-enforceable rules.
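The structured outputs listed under Layer 3 could be represented as a fixed record per candidate and chapter. The sketch below is hypothetical: the field names, the 1 to 5 score scale, and the 0.0 to 1.0 confidence range are assumptions chosen for illustration, not the project's actual schema.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class Evaluation:
    """Hypothetical evaluation record; every field and range is an assumption."""

    candidate_id: str
    chapter: str                       # e.g. "Accomplishments"
    score: int                         # assumed scale: 1 (weak) to 5 (strong)
    confidence: float                  # assumed range: 0.0 to 1.0
    risk_flags: tuple[str, ...] = ()   # short labels a recruiter can scan
    themes: tuple[str, ...] = ()       # extracted behavioral themes

    def __post_init__(self) -> None:
        # Reject out-of-range values so every record stays comparable.
        if not 1 <= self.score <= 5:
            raise ValueError("score must be between 1 and 5")
        if not 0.0 <= self.confidence <= 1.0:
            raise ValueError("confidence must be between 0.0 and 1.0")


example = Evaluation(
    candidate_id="c-001",
    chapter="Accomplishments",
    score=4,
    confidence=0.8,
    risk_flags=("vague metrics",),
)
row = asdict(example)  # plain dict, ready for a dashboard table or export
```

Giving every candidate the same fields with the same ranges is what makes side-by-side comparison possible, regardless of how the free-text answers differ.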

Mid-Project Infrastructure Disruption

At the halfway point of development:

A new engineering team joined the project.

The data schema was revised.

A new conversation state architecture was introduced.

A different prompt orchestration system was implemented.

As a result, all previous integrations stopped working.

My Response

I updated the prompt logic to fit the new schema.

I created modular prompt blocks that work independently from the user interface.

I designed diagrams to map out the conversation states.

I built fallback logic and added validation layers.

I introduced evaluation outputs that match the schema.

As a result, AI behavior became more stable, even when the infrastructure was unpredictable.
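One common way to combine fallback logic with a validation layer is to retry generation a bounded number of times and then fall back to a scripted question. The sketch below shows that general pattern under stated assumptions: `next_validated_question`, its parameters, and the retry limit are hypothetical and do not describe the project's actual orchestration code.

```python
from typing import Callable


def next_validated_question(
    generate: Callable[[], str],
    validate: Callable[[str], bool],
    scripted_fallback: str,
    max_attempts: int = 3,
) -> str:
    """Return the first generated question that passes validation.

    If no attempt passes within max_attempts, return the scripted
    fallback so the candidate never sees an unvalidated prompt.
    """
    for _ in range(max_attempts):
        candidate = generate()
        if validate(candidate):
            return candidate
    return scripted_fallback
```

Because the generator and validator are passed in as plain callables, the same wrapper keeps working when the underlying prompt orchestration or schema changes, which is the kind of decoupling the modular prompt blocks were meant to provide.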

Impact and Outcomes

Reduced conversational drift to near zero

Eliminated compound questions

Increased recruiter trust in AI outputs

Improved cross-candidate comparability

Reduced manual note-taking overhead

Stabilized performance during the infrastructure migration

Achieved full visual and interaction cohesion across the AI platform

The AI now acts and appears like a disciplined executive interviewer.